Learn How To Use Data to Build and Grow Hit Games - Part 1

This two-part series on how to build and grow products with data is written by Oleg Yakubenkov, CEO @ GoPractice. Oleg built his data-driven product management expertise working on some of the biggest games, as well as some of the biggest social platforms, in the world.


GoPractice is a tool I would have loved to have at the beginning of my product management career and it’s a tool that I love having now, a decade into making and operating free-to-play games. GoPractice is also highly recommended by some of the top mobile games companies, which is how I originally got connected with Oleg.

GoPractice offers an absolutely unique approach to training your product management chops, whether you’re currently working as a product manager or training to become one.

GoPractice is an online simulator course that gives you hands-on experience working on an ambitious product and making decisions based on real data in actual analytics systems.

All of our readers get 10% off by following this link or mentioning “DoF” as a reference.

Here’s how I view data-driven culture

In my opinion, most teams working on mobile games don’t use the full potential of data. They track topline metrics, measure the effectiveness of paid marketing campaigns, and analyze the impact of product changes, all while running meticulous A/B tests. This may sound like enough, but it really isn’t. Not if your goal is to climb to the top of the grossing charts and stay there.

There are many more ways data can increase your chances of building and operating a successful mobile game. The key is to stop thinking of data as a way to look back at what you have done and instead start using it as a tool that helps you make decisions, reduce uncertainty, and remove the main product risks as early as possible.

In this post, I will walk you through a few examples of how data can drive key product decisions at different stages of the product development cycle. But first, let me tell you a story…

#1 Test marketability before you even start development

In 2014 I was working on a mobile game in which players fought each other on an ice field. Each player had a team of three warriors, whom they threw at their rivals’ teams. It was supposed to be a game with a synchronous multiplayer mode.

This is what our game looked like in the beginning. No cats…

For the first version of the game, we decided to ship only a single-player mode, where players fought against the AI. This decision was made to save development time and test the game earlier. We planned to add the multiplayer mode in a future update. Even so, it took us 4 months to get from the idea to the test launch.

Soon after launching the first version of the game we analyzed the key metrics and here’s what we learnt:

The game had good short-term retention (Day 1 retention rate > 45%), but retention dropped off by Day 14. This was no surprise, as there was little content in the game.

Monetization didn't look that bad, and there was plenty of room for improvement.

However, we had huge problems with user acquisition. The gameplay and the visual style were chosen solely on the team’s gut feeling. As a result, the CPI (cost per install) from Facebook ads was as high as $15 in Australia. That was simply too high for a game on the casual end of mid-core. And no, we didn’t do any creative optimization that could have brought the CPI down.

While these were all valuable lessons to learn in a mere 4 months of development work, the truth is that we didn’t have to build the game to find out that user acquisition would be a problem and that players (the market) didn’t want our game. But we were blinded by our initial game idea and believed that it would work. No one challenged whether it was worth building what the team originally had in mind.

In hindsight, we should have used the Geeklab platform, or a similar tool, to test the market’s interest in the product we wanted to build. Geeklab lets developers create a fake app store page and measure the acquisition funnel without publishing the app in the app stores. All a test like this requires is a few banners, an icon, a description of the game, and some illustrations shown as screenshots of “the game”.

Geeklab is your go-to solution to analyze and create App Store and Google Play product pages.

Editor’s note:

I’ve personally used several different services to test marketability, and Geeklab has been by far the most accurate and cost-efficient of them all. While writing this post, we connected with the folks behind Geeklab, and they agreed to offer all of our readers a free month of their service. That’s how confident they (and we) are in their platform.

Use this link to get your free month and start testing themes!
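The fake store page test described above boils down to a few funnel ratios. The sketch below assumes you can export aggregate spend, impressions, clicks, and "Install" taps for each tested theme from whichever tool you use; the `FunnelTest` class, its field names, and the numbers are hypothetical. It treats each install tap as an install, which is an approximation, since nothing actually gets installed.

```python
from dataclasses import dataclass


@dataclass
class FunnelTest:
    """Aggregate results of one fake-store-page campaign (hypothetical field names)."""

    spend: float        # ad spend in dollars
    impressions: int    # ad impressions served
    clicks: int         # ad clicks that landed on the fake store page
    install_taps: int   # taps on the page's "Install" button

    def ctr(self) -> float:
        """Click-through rate of the ad creative."""
        return self.clicks / self.impressions

    def page_conversion(self) -> float:
        """Share of store page visitors who tapped "Install"."""
        return self.install_taps / self.clicks

    def estimated_cpi(self) -> float:
        """Spend per install tap, used as a proxy for CPI."""
        return self.spend / self.install_taps


# Made-up numbers for a single theme.
result = FunnelTest(spend=600.0, impressions=120_000, clicks=1_200, install_taps=40)
print(f"CTR: {result.ctr():.2%}")                                # CTR: 1.00%
print(f"Store page conversion: {result.page_conversion():.2%}")  # Store page conversion: 3.33%
print(f"Estimated CPI: ${result.estimated_cpi():.2f}")           # Estimated CPI: $15.00
```

These ratios are most informative when compared across several themes tested under the same targeting, rather than read in isolation.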

Would we have started full-scale development if we had known that the CPI would be $15? I doubt it. Had we known that at the time, the right approach would have been to first focus on finding ways to reduce the CPI dramatically. If we had failed to reduce it, we would have stopped the project without spending hundreds of thousands of dollars in development costs, not to mention the opportunity costs.

Before we started developing our next game, we created many versions of the art style and tested the market’s interest in each. The best version performed several times better than the second best. This test gave the project a huge advantage: our user acquisition costs were well below the market average, and we were able to prove from the get-go that the market wanted the game we were planning to build.
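When comparing art style variants, it helps to check that the winner’s lead is not just noise. A minimal sketch, assuming the comparison is on click-through rate and that impressions are large enough for the normal approximation to hold, is a pooled two-proportion z-test. This is a standard statistical technique swapped in for illustration, not necessarily what the team used; the function name and the counts are hypothetical.

```python
import math


def two_proportion_z_test(hits_a: int, n_a: int, hits_b: int, n_b: int) -> tuple[float, float]:
    """Pooled two-proportion z-test for H0: rate_a == rate_b.

    Returns (z, two-sided p-value). Assumes both samples are large and that the
    pooled rate is strictly between 0 and 1.
    """
    rate_a, rate_b = hits_a / n_a, hits_b / n_b
    pooled = (hits_a + hits_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_a - rate_b) / std_err
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical clicks / impressions for two art style variants.
z, p = two_proportion_z_test(300, 20_000, 150, 20_000)
print(f"z = {z:.2f}, p = {p:.2g}")
```

A tiny p-value only says the difference is unlikely to be chance; whether the gap is big enough to matter is a separate judgment about CPI.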

This second game was C.A.T.S. It went on to get over 100 million downloads and several accolades, such as being nominated as the best game of 2017 by Google Play.

By starting off with a theme test, we were able to secure low CPIs before kicking off development. This laid an incredible foundation for the project and allowed us to claw our way up to 100M installs (pun intended).

This story is just one example of how data can help reduce uncertainty, invest resources more wisely, and increase the chances of building a successful game.

If you’re still on the fence, let me turn you into a believer with more examples...

#2 Use data to find your niche in the market

A few years ago, I wanted to learn more about ASO (App Store Optimization). As with most things, the best way to learn is through practice, so I decided to build a game with the goal of growing it through organic traffic.

At that point I faced the question every game maker faces: what game should I build? This is an important question, because if you choose the wrong market segment or overestimate your resources, you will most likely fail.

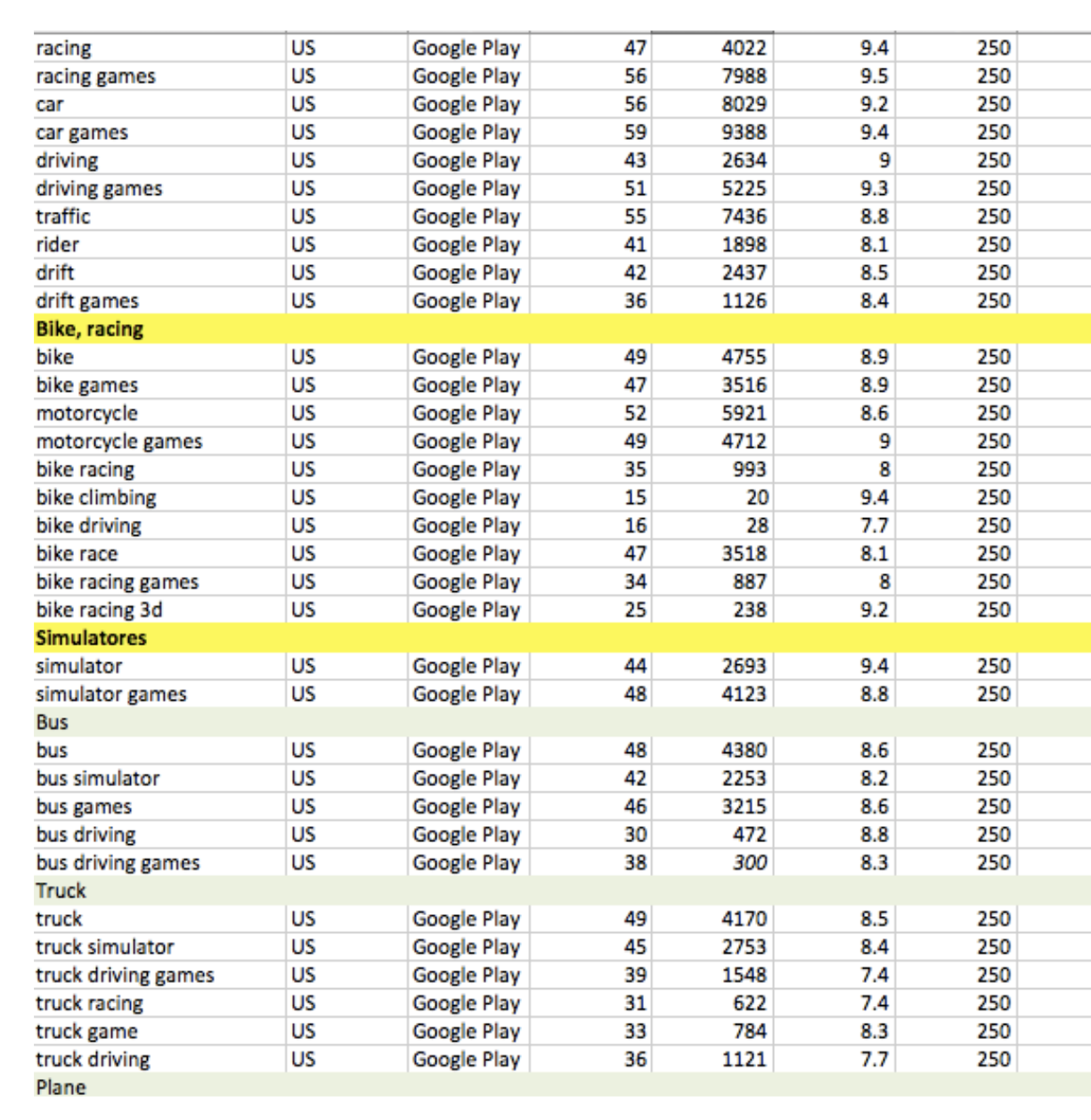

I started researching the market to understand what are the market segments where games rely mostlyon organic traffic. Then I spent a week analyzing each of the segment: Who are the leaders in the segment? How do they get organic traffic? What are the potential ways to outperform them? I also spent a lot of time creating and analyzing the semantic core of each niche that looked promising:

After the analysis, I decided to build a Truck Simulator game. There were a few data-backed reasons behind this choice:

It was a niche with a lot of organic traffic and, most importantly, a growing one. Top games in the segment were getting millions of downloads per month (I used App Annie and Datamagic for the analysis).

Even though there were clear leaders in this segment, there were still many apps with more than 100k installs per month. That meant games were getting organic traffic in many different ways.

Most games had low production values and monetized through ads. That meant that their LTV was low, and they could not afford paid user acquisition. This was good news for me, as I could not afford paid UA either.

These games didn’t acquire users through paid ads, and they weren’t featured by the app stores either. They survived purely on organic traffic from search and occasional app store recommendations.

All the games looked and played largely the same. The gameplay was very simple: the player gets a truck and drives around the area delivering goods. Neither the gameplay nor the controls were adapted to mobile devices.

With all this in mind, I started thinking about a game that would give me an advantage over a slew of largely identical competitors. I ended up creating Epic Split Truck Simulator, a casual arcade game with fun and, to some extent, hardcore gameplay. It was inspired by the famous Volvo truck ad in which Jean-Claude Van Damme does a split between two moving trucks.

The game was built over a weekend and, as of today, has racked up over 2 million downloads (99% of them organic). The main reason it grew was that it outperformed the competition in store conversion and short-term retention. That is also why Google Play eventually decided to recommend our game instead of our competitors’ games.

The key lesson here is that a deep analysis of the market you want to enter is crucial for future success. It will inform your decisions and help you focus on the right things. Here are the questions worth thinking about before you commit to full development:

Is this segment of the market growing or stagnating? Needless to say, it’s always easier to work in a fast-growing market.

How strong is the competition? Is the sub-category dominated by one or two games, or are there many games with small and medium market shares? Do the category leaders change quickly, or do they stay on top for a very long time? You’re looking for a market that isn’t dominated by one or two games that leave only crumbs for everyone else. A market where the top games change from time to time offers potential for newcomers.

How did the leading games in the sub-category achieve their positions? Did it require aggressive paid marketing, or do they also attract a lot of organic traffic? Are the games regularly featured, or are they growing through cross-promotion within the same publisher’s portfolio? What are the LTV, RPI (revenue per install), and retention rates of the top games in the category? Are they top-of-mind games? What art styles do they use?

How, and in which areas, is your game going to beat its competitors? What added value does your game bring? Will you find a way to grow through paid ads in a market where everyone else relies on organic traffic? Do you know how to significantly improve LTV compared to the rest of the competition? Are your product and/or marketing improvements sustainable, or are they something other games in the category can quickly replicate?

Answering these questions will help you focus on the right things. You have to do something better and/or differently to be able to win a meaningful market share.
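Two of the questions above, whether a segment is growing and how concentrated it is, can be roughed out from monthly download estimates exported from a market intelligence tool. A sketch under that assumption: compound monthly growth for the first question, and the Herfindahl-Hirschman Index (the sum of squared market shares) plus top-N share for the second. HHI is a standard concentration measure swapped in here for illustration; the app names and figures are invented.

```python
def monthly_growth(downloads: list[float]) -> float:
    """Compound month-over-month growth rate between the first and last month."""
    periods = len(downloads) - 1
    return (downloads[-1] / downloads[0]) ** (1 / periods) - 1


def herfindahl_index(downloads_by_app: dict[str, float]) -> float:
    """Sum of squared download shares on a 0-1 scale; values near 1 mean one app dominates."""
    total = sum(downloads_by_app.values())
    return sum((d / total) ** 2 for d in downloads_by_app.values())


def top_n_share(downloads_by_app: dict[str, float], n: int) -> float:
    """Share of segment downloads captured by the n biggest apps."""
    ranked = sorted(downloads_by_app.values(), reverse=True)
    return sum(ranked[:n]) / sum(ranked)


# Invented figures: three months of segment downloads, and the latest month split by app.
segment = [1_000_000, 1_100_000, 1_210_000]
apps = {"app_a": 484_000, "app_b": 363_000, "app_c": 242_000, "app_d": 121_000}
print(f"Monthly growth: {monthly_growth(segment):.1%}")  # Monthly growth: 10.0%
print(f"HHI: {herfindahl_index(apps):.2f}")               # HHI: 0.30
print(f"Top-2 share: {top_n_share(apps, 2):.0%}")         # Top-2 share: 70%
```

Steady growth with a low HHI points to the open, expanding kind of market described above; a high top-2 share points to one dominated by a couple of leaders.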

This is how I chose the positioning of my game. Back then, Deconstructor of Fun wasn’t yet publishing yearly predictions based on a detailed gaming taxonomy…

Every year, Deconstructor of Fun publishes an analysis of the mobile market that helps identify where you should and shouldn’t compete:

2019 Predictions: #1 Puzzle Games Category

2019 Predictions: #2 the Hyper-Casual Games

2019 Predictions: #3 Simulation & Customisation

2019 Predictions: #4 Location Based Games

2019 Predictions #5 RPG

2019 Predictions #6: Card Battlers

2019 Predictions #7: Strategy Games

2019 Predictions #8: Social Casino

2019 Predictions #9: Battle Royale

Editor’s note: We’re planning to launch Mobile Market Monthly for companies, a subscription service that will give you all the relevant insights (both product and marketing) to make strategic decisions on where and how to compete. Let us know if you’d like to be one of our pilot customers. We have only a couple of spots left.

#3 Spot the early signs of success - or imminent failure

I have helped make a few games that became hits: King of Thieves (over 75 million downloads, Apple Editors’ Choice), C.A.T.S. (over 100 million downloads, rated best game of 2017 by Google Play), and Cut the Rope 2. I have also worked on many more games that never made it.

My experience has led me to identify a couple of early signals of whether a game is on track or in trouble. I find it useful to monitor them from the early development stage onward, when teams tend to be over-optimistic. Just keep in mind that these are signs, not definitive proof.

Sign 1: Testers can’t stop playing your game

I find testing an early version of a game on Playtestcloud very useful. Playtestcloud asks a player (whom you select based on various segmentations) to play your game for 30 minutes straight. The session is recorded, capturing the tester’s screen, touch points, and microphone. This gives you very useful qualitative data and a chance to see the game through your players’ eyes. I also like that the test happens in the comfort of the tester’s own environment (usually at home) rather than in your studio or a research center, where their behaviour changes.

I noticed an interesting pattern with the Playtestcloud tests: in games that later proved successful, testers played for more than 30 minutes. They simply got really engaged with the early version and didn’t want to stop. By contrast, in games that were later killed, the sessions lasted exactly the required 30 minutes or less.
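If you run several remote playtests per build, this signal is easy to track as a number. A minimal sketch, assuming you can export each session’s length in minutes; the function and the sample sessions are hypothetical, and the 30-minute threshold is the required session length mentioned above.

```python
from statistics import median


def playtest_summary(session_minutes: list[float], required_minutes: float = 30.0) -> dict[str, float]:
    """Median session length and share of testers who kept playing past the required time."""
    kept_playing = sum(1 for minutes in session_minutes if minutes > required_minutes)
    return {
        "median_minutes": median(session_minutes),
        "share_over_required": kept_playing / len(session_minutes),
    }


# Hypothetical session lengths (minutes) for two builds.
build_a = [30, 30, 28, 30, 31]
build_b = [42, 35, 30, 55, 38]
print(playtest_summary(build_a))  # {'median_minutes': 30, 'share_over_required': 0.2}
print(playtest_summary(build_b))  # {'median_minutes': 38, 'share_over_required': 0.8}
```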

Sign 2: The development team doesn’t play their own game

It’s a great sign if a community forms inside the company around the early versions of your game: people who play them, give you a lot of feedback, and send you ideas, especially if a significant part of this community isn’t on the team building the game. Conversely, when even team members avoid playing the game in their free time and play it only because it’s their job, it usually means something is off and the game simply isn’t engaging enough.

These are not scientifically proven signs. Then again, building games is not a scientific process either. Most likely you will notice other similar patterns that will work for you and will help you navigate through the uncertainty in the early days of building a new game.

I definitely do not recommend killing a game just because testers don’t play it for more than 30 minutes, or because no engaged community has formed in your company around its early versions. Nor do I recommend keeping a game alive just because the development team loves it when all other signs point to the game being a dud. These are just signs that something might be going in the wrong direction.

#4 Understanding = segmentation + analysis

It’s time for yet another story, this time about a game called King of Thieves, which has more than 75 million downloads as of today. When we launched the first version of this game after 16 months of development, the early metrics looked really bad: a D1 retention rate of 26% that dropped to a mere 9% by Day 7. Monetization didn’t look any better. It took four days before the first purchase was made. Low conversion and low retention tend to make a pretty bad combo...
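For reference, Day-N retention is usually computed per install cohort: the share of players who come back exactly N days after installing. A minimal sketch, assuming you have each player’s install date and the set of dates they were active; the function and data are hypothetical, and analytics tools may define day boundaries differently (for example, 24-hour windows instead of calendar days).

```python
from datetime import date, timedelta


def day_n_retention(
    installs: dict[str, date],
    activity: dict[str, set[date]],
    n: int,
    as_of: date,
) -> float:
    """Share of eligible installers who were active exactly n calendar days after install.

    Players whose Day n is still in the future relative to `as_of` are excluded,
    so recent installs don't drag the number down.
    """
    eligible = [user for user, installed in installs.items() if installed + timedelta(days=n) <= as_of]
    if not eligible:
        raise ValueError("no players have reached day n yet")
    retained = sum(
        1 for user in eligible if installs[user] + timedelta(days=n) in activity.get(user, set())
    )
    return retained / len(eligible)


# Hypothetical cohort of four players who installed on the same day.
installs = {user: date(2024, 1, 1) for user in ("u1", "u2", "u3", "u4")}
activity = {
    "u1": {date(2024, 1, 2), date(2024, 1, 8)},
    "u2": {date(2024, 1, 2)},
    "u4": {date(2024, 1, 2)},
}
print(day_n_retention(installs, activity, 1, as_of=date(2024, 1, 31)))  # 0.75
print(day_n_retention(installs, activity, 7, as_of=date(2024, 1, 31)))  # 0.25
```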

Based on this data we should have killed the game, but we decided to invest more time into understanding what was going wrong. We knew the game was fun: a big community inside the company had played it while we were building it. Not to mention that the playtests on Playtestcloud usually lasted longer than the required 30 minutes.

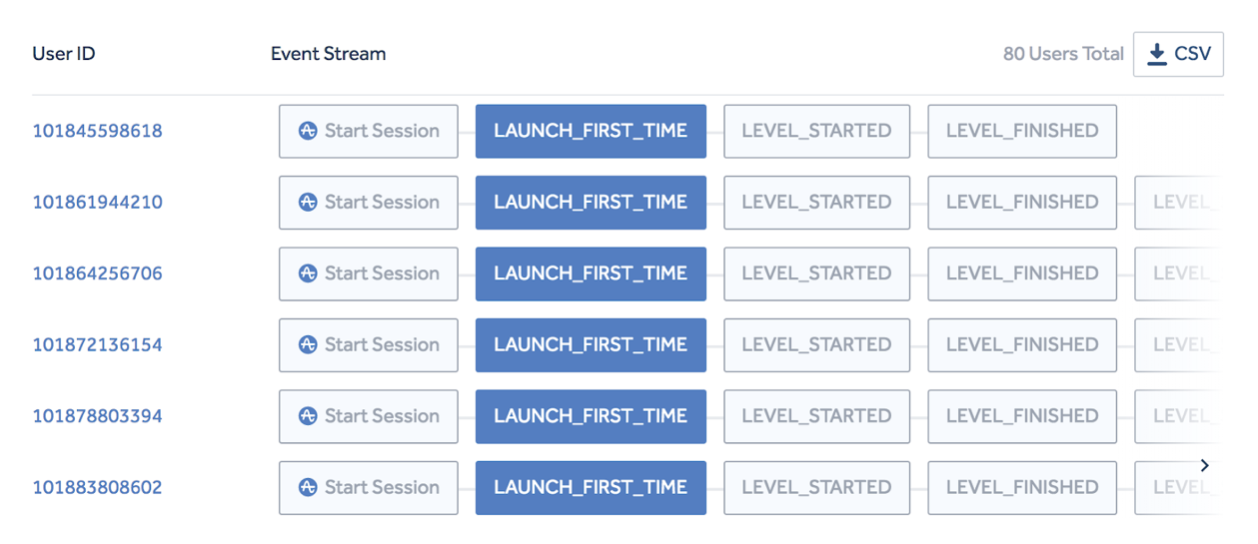

To understand the reasons behind the poor KPIs we started comparing the paths of those users who would retain and those who would churn. To do that, we got a random sample of successful and unsuccessful users and then manually analyzed the sequences of their in-game events. There is a great tool in Amplitude that allows you to do that:

Using Amplitude, we were able to segment our players by behaviour and refocus the game to encourage players to play like our most engaged segments. This data-driven approach turned a failing game into a successful one, taking D7 retention from 9% to 20%.

We quickly noticed that retained players discovered Player versus Player (PvP) mode very early and spent most of their play time fighting others, while players who churned never attacked other players. After discovering this crucial insight, we calculated that 60% of new users never tried multiplayer, the main and most exciting part of the game’s core loop.
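Once you suspect a behaviour like this, a quick check is to split new players by whether they ever tried the feature and compare retention across the two groups. A sketch assuming a per-player export with a hypothetical `tried_pvp` flag and a Day 7 retention flag; the players are made up. This only shows correlation: the onboarding change described next is what tested causation.

```python
from dataclasses import dataclass


@dataclass
class Player:
    user_id: str
    tried_pvp: bool     # hypothetical flag: attacked another player at least once
    retained_d7: bool   # active on Day 7 after install


def pvp_segment_report(players: list[Player]) -> dict[str, float]:
    """Share of players who never tried PvP, and D7 retention in each segment."""
    tried = [p for p in players if p.tried_pvp]
    never = [p for p in players if not p.tried_pvp]

    def d7_rate(group: list[Player]) -> float:
        return sum(p.retained_d7 for p in group) / len(group) if group else float("nan")

    return {
        "share_never_tried_pvp": len(never) / len(players),
        "d7_tried_pvp": d7_rate(tried),
        "d7_never_tried_pvp": d7_rate(never),
    }


# Made-up sample: players u0-u3 tried PvP, u4-u9 never did.
players = [Player(f"u{i}", tried_pvp=i < 4, retained_d7=i in (0, 1, 2, 9)) for i in range(10)]
print(pvp_segment_report(players))
```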

We changed the onboarding flow to force players to engage with the PvP mode, and the impact was immediate: D1 retention soared to 41%, while D7 retention rose to a far healthier 20%.

The main lesson here is that you should strive to understand the key elements that make your players retain. One of the best ways to do that is to manually analyze the sequences of events of retained and churned players.
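Manual path analysis does not need much tooling. A sketch of the sampling step, assuming a raw event export keyed by user with `(timestamp, event_name)` pairs; all names here are hypothetical, and tools such as Amplitude let you browse individual user timelines directly instead. The fixed seed makes the sample reproducible, so several people can review the same players.

```python
import random


def sample_paths(
    event_log: dict[str, list[tuple[int, str]]],
    retained_ids: set[str],
    churned_ids: set[str],
    k: int = 5,
    first_n: int = 20,
    seed: int = 0,
) -> dict[str, dict[str, list[str]]]:
    """Pick up to k random retained and k random churned players and return their first events in order."""
    rng = random.Random(seed)

    def paths(ids: set[str]) -> dict[str, list[str]]:
        chosen = rng.sample(sorted(ids), min(k, len(ids)))
        return {
            uid: [name for _, name in sorted(event_log.get(uid, []))][:first_n]
            for uid in chosen
        }

    return {"retained": paths(retained_ids), "churned": paths(churned_ids)}


# Tiny hypothetical log: timestamps are seconds since install.
log = {
    "u1": [(40, "pvp_attack"), (5, "tutorial_done"), (90, "pvp_attack")],
    "u2": [(5, "tutorial_done"), (60, "shop_open")],
}
for group, users in sample_paths(log, {"u1"}, {"u2"}).items():
    for uid, events in users.items():
        print(group, uid, " -> ".join(events))
```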

A deep understanding of what exactly keeps your users engaged is key to the game’s future development. Once you understand the core element of your game, every new feature you add should amplify it. Looking back, we should also have realized that PvP was the main driving element in King of Thieves. After all, it was the game mode everyone engaged with internally during development.

#5 Test early, test hard

In my opinion, a mistake many teams make is acting too cautiously in the early days of working on a new game. They avoid running risky experiments and don’t attempt to push the game in different directions.

There are a few reasons why I advocate aggressive early experimentation:

Every game is like a new universe with its own set of laws. You have to learn these laws from scratch and the best way to do this, in my opinion, is to run experiments.

Another important point is that in the early days you have only a few users, so you can run aggressive experiments without causing irreversible problems. In other words, the early stage is the perfect time to make mistakes and learn from them. Even if an experiment turns out to be a big mistake, you will learn more from it than it will cost you; after all, we’re talking about games with only a few hundred users. That’s definitely not the case once the game has scaled up.

Early on, your player base isn’t big enough to reach statistical significance on small effects. You have to test changes that can have a significant impact on the metrics to get statistically significant results; otherwise, you won’t learn anything from an experiment.
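The link between player base size and effect size can be made concrete with the textbook sample-size approximation for comparing two proportions (two-sided test at significance level α, power 1 − β). The sketch below is that standard formula, not something from the original team; the function name and the retention numbers are only examples.

```python
import math
from statistics import NormalDist


def sample_size_per_group(
    p_control: float, p_treatment: float, alpha: float = 0.05, power: float = 0.8
) -> int:
    """Approximate number of players needed in EACH group to detect p_control -> p_treatment."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p_control + p_treatment) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p_control * (1 - p_control) + p_treatment * (1 - p_treatment))
    ) ** 2
    return math.ceil(numerator / (p_control - p_treatment) ** 2)


# Small lift vs. large lift on a 9% D7 retention baseline.
print(sample_size_per_group(0.09, 0.10))  # more than ten thousand players per group
print(sample_size_per_group(0.09, 0.18))  # a couple of hundred players per group
```

With only a few hundred players, only changes of the second kind are detectable at all, which is the argument for bold early experiments.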

But let’s get back to King of Thieves. After changing the onboarding flow and fixing the retention rate, we still had big problems with monetization. We were far from being ROI positive (we couldn’t have been much further, to be honest). That is when we started experimenting to find the levers that could impact monetization and to uncover the product direction we needed to take.
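Being ROI positive here means that a player’s lifetime value exceeds what it costs to acquire them. A common back-of-the-envelope LTV estimate multiplies average revenue per daily active user (ARPDAU) by the expected number of active days per install, i.e. the area under the retention curve. The sketch assumes a flat ARPDAU and linear interpolation between a few known retention points; both are simplifications, the function names are hypothetical, and all numbers are invented.

```python
def interpolated_retention(points: dict[int, float], day: int) -> float:
    """Linearly interpolate retention for `day` from known (day -> retention) points."""
    days = sorted(points)
    for lo, hi in zip(days, days[1:]):
        if lo <= day <= hi:
            return points[lo] + (day - lo) / (hi - lo) * (points[hi] - points[lo])
    raise ValueError(f"day {day} is outside the known retention points")


def estimate_ltv(arpdau: float, retention: dict[int, float], horizon: int) -> float:
    """ARPDAU x expected active days per install over days 0..horizon."""
    points = {0: 1.0, **retention}  # every installer is active on day 0
    if horizon not in points:
        raise ValueError("retention must include a point at the horizon")
    active_days = sum(interpolated_retention(points, d) for d in range(horizon + 1))
    return arpdau * active_days


# Invented retention curve, ARPDAU, and CPI.
ltv = estimate_ltv(arpdau=0.10, retention={1: 0.45, 7: 0.15, 30: 0.04}, horizon=30)
cpi = 2.00
print(f"30-day LTV: ${ltv:.2f}, LTV/CPI: {ltv / cpi:.2f}")
```

An LTV/CPI ratio below 1, as in this made-up case, is what "far from ROI positive" looks like in numbers.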

One of the first things we did was run an experiment in which, for half of our users, we increased all in-game timers fivefold and greatly increased IAP prices. Our players were not happy with that decision, and King of Thieves plummeted to a 2-star store rating. But at the same time, LTV increased 2.5-fold. Would we have dared to run a similar experiment in a game with millions of users? I don’t think so.
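Running a change on half of your users requires a stable split, so that each player always sees the same variant. The article doesn’t say how the team assigned players; a common approach, sketched here with hypothetical names (`assign_variant`, `ltv_ratio`, the experiment name `timers_x5`), is to hash the user ID together with an experiment name, then compare average revenue per player in each group over the same observation window.

```python
import hashlib
from statistics import mean


def assign_variant(user_id: str, experiment: str, treatment_share: float = 0.5) -> str:
    """Deterministically map a user to "control" or "treatment" for a given experiment."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 2**32  # first 32 bits -> uniform value in [0, 1)
    return "treatment" if bucket < treatment_share else "control"


def ltv_ratio(control_revenue: list[float], treatment_revenue: list[float]) -> float:
    """Average revenue per treatment player divided by average revenue per control player."""
    return mean(treatment_revenue) / mean(control_revenue)


# The same user always lands in the same group for a given experiment name.
print(assign_variant("player-42", "timers_x5"))
print(ltv_ratio([1.0, 3.0], [5.0, 5.0]))  # 2.5
```

Including the experiment name in the hash means a player’s group in one experiment says nothing about their group in the next, which keeps concurrent experiments from being confounded with each other.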

We ran a lot of crazy experiments during the soft launch, which helped us understand the product better. In the end, we found the levers that had a clear impact on monetization. By the time of the global launch, LTV had improved more than 40-fold compared to the first version of the game.

In my opinion, experiments should not be limited to testing new features and measuring their impact. Experiments can also help your team understand their game, see its potential, learn how to affect it and quickly test the directions the game can take.

Rapid and Constant Wins the Race

Data can increase your chances of building and operating a successful mobile game in many ways. The key is to stop treating data as a record of what you have already done and start using it as a tool for making decisions, reducing uncertainty, and removing the main product risks as early as possible.

Experiment early and often, even before you start development. And never underestimate the importance of consistently improving the product through marginal gains. The quality of that work is often what separates the best products from those that lag behind.