From the Trenches: How to Grow a Live Game

This is a guest post by Dylan Tredrea. He was a PM at Disney, ran the BI department at Geewa, and is currently a Senior Product Manager at Rovio. Hit him up on LinkedIn or Twitter and let him know how you liked the post!

No one cares.

After weeks of analysis, seemingly endless debate, and an incredible amount of effort from the entire team, the big clan update is finally live. And no one cares. The feedback on the forums can be best summed up as: “Lame update! Give us more free gems!”

No one is spending more money. No one is returning to the game more often. People who already play the game aren’t playing longer or more times each day. There’s no lift in the collection or consumption of related currencies. Digging deeper in hopes of finding any measurable impact, the team pulls the gameplay data related to clans hoping to see increased engagement, but alas, again there is no change. Despite careful research & analysis, a design eagerly approved & championed, and the pile of experience and wisdom everyone on the team threw into the update… No one cares. Welcome to running a live game!

Of course the above is merely a generic, fictional example, but anyone with a bit of experience running live games can surely relate.

Running a Live Game

Running a live game is the process of updating a game with client updates and ‘live operations’ (changes made with server controls that don’t require a client update). Back in the day games shipped in a box and that was it, but today many games are live experiences continually being updated and tweaked online. The team running the game must choose a few ideas--usually out of hundreds--to implement. If the game is in full, active live development the big question is typically: “What will have the most impact for the most people, with the goal of sustaining the product and growing the business?” The first, most important, and hardest lesson (one that frankly only gets a bit easier with time) is that, even if you’re doing well, players will not care about the vast majority of changes. That is to say, most changes will produce no measurable change in player behavior.

This humble starting point is the foundation of running a live game. It’s not possible to run a live game well without a crystal clear understanding of this truth. A game where each shot has a 30% chance of success should be played very differently than a game where each shot has a 5% chance of success. Overconfident teams over-invest in big ideas, undervalue generating learnings as quickly as possible, and skip over building the tools and practices to manage risk.
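To make the 30% vs. 5% comparison concrete, here is a small back-of-the-envelope sketch. It assumes each change is an independent "shot" with a fixed chance of moving player behavior, which is a simplification, and the function names are mine rather than anything standard.

```python
import math


def chance_of_at_least_one_hit(p_success: float, attempts: int) -> float:
    """Chance that at least one of `attempts` independent changes moves behavior."""
    return 1.0 - (1.0 - p_success) ** attempts


def attempts_needed(p_success: float, target: float = 0.9) -> int:
    """Smallest number of independent attempts with at least `target` chance of one hit."""
    return math.ceil(math.log(1.0 - target) / math.log(1.0 - p_success))


for p in (0.30, 0.05):
    print(
        f"p={p:.2f}: 5 attempts -> {chance_of_at_least_one_hit(p, 5):.0%}, "
        f"attempts for a 90% chance of one hit -> {attempts_needed(p)}"
    )
# p=0.30: 5 attempts -> 83%, attempts for a 90% chance of one hit -> 7
# p=0.05: 5 attempts -> 23%, attempts for a 90% chance of one hit -> 45
```

Under these assumptions, a team that believes it is in the 30% world plans for a handful of big bets, while a team that accepts it is in the 5% world needs dozens of cheap, fast attempts.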

For example, say a team wants to add more events focused on competition between clans based on the hypothesis that increased clan competition leads to increased spending. An overconfident team would dive right in and build the events. A well-run, humble team would first use server controls on the existing clan events to test the hypothesis right away. This is possible because they made sure to include robust server controls when shipping the first version of the events. They change the reward scheme so first place rewards are double or triple their current setting, giving a big, clear incentive to be more competitive.

Additionally, they use in-game communication tools to clearly communicate that this is happening and that the change is temporary (‘Mega Champions Rewards Week!’ or the like). Community and customer support teams are in the loop, on message, and ready to collect, analyze, and share aggregated qualitative feedback. The team may also send out in-game surveys to various player segments for additional qualitative data that can be combined with quantitative data from the same users. The end result is that long before spending scarce development resources on building the content, a well-run, humble team has a much better understanding of whether or not this content will truly motivate their players to spend more.
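As a rough illustration of what such a server control might look like, here is a minimal sketch of a time-boxed reward override. Everything in it is hypothetical: the `RewardOverride` class, the field names, the base reward of 500, and the dates are assumptions for illustration, not a description of any real game's backend.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class RewardOverride:
    """A temporary, server-side multiplier on first place clan event rewards."""
    label: str          # player-facing name used in in-game communication
    multiplier: float   # 2.0 doubles the reward, 3.0 triples it
    starts_at: datetime
    ends_at: datetime

    def is_active(self, now: datetime) -> bool:
        return self.starts_at <= now < self.ends_at


def first_place_reward(base_reward: int, override: Optional[RewardOverride], now: datetime) -> int:
    """Return the reward to grant, applying the override only inside its window."""
    if override is not None and override.is_active(now):
        return round(base_reward * override.multiplier)
    return base_reward


# Hypothetical one-week test of the "more competition -> more spending" hypothesis.
override = RewardOverride(
    label="Mega Champions Rewards Week!",
    multiplier=2.0,
    starts_at=datetime(2024, 1, 1, tzinfo=timezone.utc),
    ends_at=datetime(2024, 1, 8, tzinfo=timezone.utc),
)
during = first_place_reward(500, override, datetime(2024, 1, 3, tzinfo=timezone.utc))
after = first_place_reward(500, override, datetime(2024, 1, 9, tzinfo=timezone.utc))
print(during, after)
```

The point of the explicit end time is that the change reverts on its own, which matches the message to players that the boost is temporary.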

The Key Challenge and Context of Running Live Games

It’s important to remember that ultimately running a live game is about changing player behavior. That is not easy. Think about all the games you tried, but stopped playing. Would you have kept playing if they gave you more hard currency? Had a global chat system? Gave you some epic loot on your first few games? Probably not… The experience needs to be meaningfully different to produce any real change in your behavior.

Animal Jam World Gift, Battle Bay Epic Loot, Marvel Contest of Champions Global Chat

The market, or context, of the ‘game’ of live games doesn’t make it any easier. The market isn’t static; it’s always changing and usually not in a friendly direction. Users you buy are almost certainly going to get more expensive. Users you don’t buy directly are almost certainly going to get more difficult to acquire. Most successful live games, if they stopped improving their KPIs, would eventually find themselves in a death spiral as the growing cost of acquiring new users eclipses the revenue each user generates. A decent rate of improvement isn’t merely about thriving. It’s a requirement for survival.
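The death spiral is easy to see with some toy arithmetic. The sketch below assumes acquisition cost and revenue per user each grow at a constant rate per period; the starting values and growth rates are made up for illustration, and real markets are far less tidy.

```python
from typing import Optional


def periods_until_unprofitable(
    cost_per_user: float,
    revenue_per_user: float,
    cost_growth: float,
    revenue_growth: float,
    max_periods: int = 120,
) -> Optional[int]:
    """Return the first period in which acquiring a user costs more than the
    user generates, or None if that does not happen within `max_periods`."""
    for period in range(1, max_periods + 1):
        cost_per_user *= 1.0 + cost_growth
        revenue_per_user *= 1.0 + revenue_growth
        if cost_per_user > revenue_per_user:
            return period
    return None


# Hypothetical game: $2.00 to acquire a user who generates $3.00.
stalled = periods_until_unprofitable(2.0, 3.0, cost_growth=0.05, revenue_growth=0.0)
improving = periods_until_unprofitable(2.0, 3.0, cost_growth=0.05, revenue_growth=0.05)
print(stalled, improving)  # 9 None
```

With costs rising 5% per period and flat KPIs, this hypothetical game goes underwater in period 9. If revenue per user improves at the same 5%, the margin holds, which is the sense in which improvement is a requirement for survival.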

For the live game teams facing these challenges in this environment what’s the best strategy for success? The best strategy is a process that quickly generates learnings about your audience allowing you to get better at identifying the few variables which can change behavior. A properly run live game process gains a learning from every distinct effort. The more distinct efforts (or “experiments”) the team is able to execute--even if almost all of these things don’t work--the more likely the team will be able to identify what will have an outsized business impact next time.

The toughest time for a live game is the release week. Years of work culminate in this moment: the first real chunk of users and data. What follows? Dozens of very experienced and skilled people disagreeing about what to do next. Ultimately the task of running a live game is to develop a process which transforms this exciting, stressful, and unclear situation into a snowballing, defensible competitive advantage.

Theoretical and Practical Framework of Running Live Games

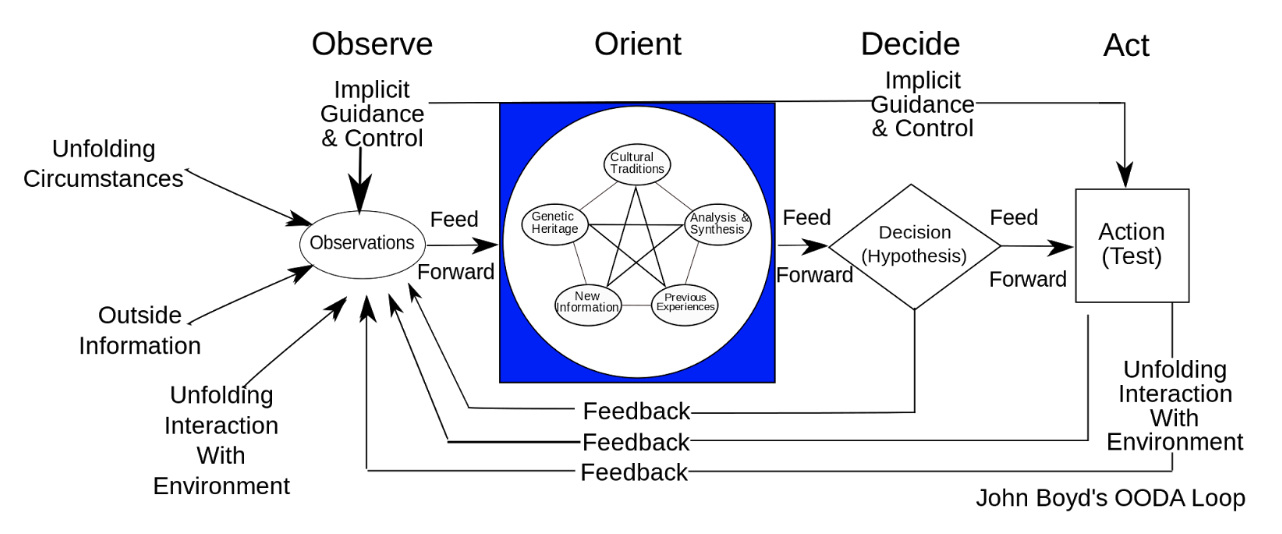

The theoretical framework of a good live game process is the scientific method: an iterative, hypothesis driven approach to learn what motivates and changes the behavior of your audience. A more practical framework comes from the military: the OODA loop, the process of Observing, Orienting, Deciding, and Acting faster and more effectively than the enemy. A key component of the OODA loop is knowing that “orienting”, or understanding, the data is far harder than collecting it. Perhaps even more important is how success tends to occur: an organization that can execute the loop faster, understanding and reacting to challenges more quickly than competitors, wins.

The OODA loop (Wikipedia)

Speed is an underappreciated key to successfully running a live game. It’s tempting to wait for more data, re-run tests, and do everything one can to mitigate risks in such a volatile, difficult-to-analyze environment. A conservative approach is slow, however, and generates a relative paucity of learnings. A live game team (and studio culture!) which embraces and maximizes risk will maximize the pace of learning and, as a result, business growth. Remember, it’s quite difficult to have ANY measurable change in player behavior: positive or negative. A team that moves too quickly and breaks something has identified one of the few variables which drive player behavior! Sure, in this case the impact was negative, but as long as the team and tools are in place to quickly mitigate this risk, that learning can be carried forward to improve growth.

How exactly does a well-run live game process work?

The first key process is the inflow of ideas. It’s certainly true there is rarely a shortage of ideas at game studios, but it’s vital the flow of these ideas to the game team is as wide and steady as possible. Every pitch, be it from the CEO or the receptionist, should be taken in and equally considered. This may seem like an odd approach but a process that’s ‘source agnostic’ helps to motivate everyone in the studio to contribute. It also can be helpful when the team is in the sometimes uncomfortable situation of telling the CEO their idea isn’t a good fit.

Day to day this can be hard. Sometimes it’s tiring to continually be pitched and prodded for this idea and that idea. It doesn’t matter if you’re feeling up to it today or not though, every single one of these ideas must be met with an open and considerate mind. In part because plenty of great ideas start out sounding stupid or out of place (especially the most impactful), but primarily because the process breaks down if people stop bringing those ideas to the team. If the inflow drops off because the team is dismissive, the quality of the output is certainly going to suffer as well.

The second, and perhaps most important and most challenging, step is pricing the ideas and forecasting their impact. My preferred process is to have a product manager scope the business impact and the risk that this estimate is wrong, and a tech lead scope the rough development cost and the risk that this estimate is wrong. The result is a clear, concise cost vs. benefit analysis that allows for very different ideas (safe, small impact vs. high risk, high impact) to be compared and contrasted. The product owner chooses the priority from this “menu” for development. Predicting the future is hard, but the point is a proper live game process drives constant improvement as the team learns what truly motivates their audience.
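One way to put very different ideas on the same "menu" is a simple risk-adjusted score. The sketch below is only one possible scheme, not a standard formula: the `Idea` fields, the scoring rule (expected impact divided by expected cost), and all the numbers are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Idea:
    name: str
    impact_if_right: float      # PM estimate, in arbitrary business-impact units
    chance_impact_right: float  # PM's confidence the impact materializes (0..1)
    dev_weeks: float            # tech lead's rough development cost
    overrun_risk: float         # expected fractional cost overrun, 0.5 = +50%

    @property
    def expected_impact(self) -> float:
        return self.impact_if_right * self.chance_impact_right

    @property
    def expected_cost(self) -> float:
        return self.dev_weeks * (1.0 + self.overrun_risk)

    @property
    def score(self) -> float:
        return self.expected_impact / self.expected_cost


ideas = [
    Idea("New clan war event", 100, 0.15, 12, 0.50),
    Idea("Global chat", 40, 0.10, 6, 0.25),
    Idea("Double first place rewards (server-side)", 20, 0.30, 1, 0.10),
]

# The "menu": highest risk-adjusted impact per unit of development cost first.
menu = sorted(ideas, key=lambda idea: idea.score, reverse=True)
for idea in menu:
    print(f"{idea.name}: impact {idea.expected_impact:.1f}, "
          f"cost {idea.expected_cost:.1f}, score {idea.score:.2f}")
```

The score is an aid for comparison, not the decision itself. The product owner still picks from the menu, and comparing these estimates against observed results later is how the team's forecasting improves.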

The third step, executing the priority, is a process that has to be managed and accomplished with a live game in mind as well. The right server side controls, analytics, in-game communication, and so on have to be included so the team has the ability to monitor risks, measure results, and keep players informed along the way. New additions have to be carefully rolled out with a limited release and/or A/B tests to ensure the change is carefully monitored and measured. For example, the rewards or benefits should be low at first as it’s far easier to give something more to the players than to take something away.

Finally, with the update live and new data pouring in, the OODA loop starts anew. The team observes the impact of the change and compares that with the predicted impact. Does the data support or refute the underlying hypothesis? If the theory was that global chat would make it easier for clans to recruit, thus driving up retention, then did a meaningful change in retention occur? If not, did it at least successfully increase clan engagement? If not, did the communication of the new feature at least reach most players? The outcome of this is a set of learnings about what works and what doesn’t.
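To judge whether "a meaningful change in retention" occurred, one common starting point is a two-proportion z-test between the control and test groups. The sketch below uses only the standard library. The retention counts are invented, and a real analysis would also consider effect size, test duration, and how many metrics are being checked at once.

```python
import math


def two_proportion_z_test(control_hits: int, control_total: int,
                          variant_hits: int, variant_total: int):
    """Return (difference in rates, z statistic, two-sided p-value)."""
    p_control = control_hits / control_total
    p_variant = variant_hits / variant_total
    pooled = (control_hits + variant_hits) / (control_total + variant_total)
    std_err = math.sqrt(pooled * (1.0 - pooled) * (1.0 / control_total + 1.0 / variant_total))
    z = (p_variant - p_control) / std_err
    # Two-sided p-value under the normal approximation.
    p_value = math.erfc(abs(z) / math.sqrt(2.0))
    return p_variant - p_control, z, p_value


# Hypothetical day-7 retention: players retained out of players in each group.
small_lift = two_proportion_z_test(4000, 10_000, 4100, 10_000)
large_lift = two_proportion_z_test(4000, 10_000, 4300, 10_000)
for label, (diff, z, p) in (("+1 point", small_lift), ("+3 points", large_lift)):
    print(f"{label}: diff={diff:.3f}, z={z:.2f}, p={p:.4f}")
```

In this made-up example, a one point lift with 10,000 players per group is not distinguishable from noise at the usual 5% threshold, while a three point lift clearly is. That is a useful reminder of how large a behavior change has to be before it shows up in the data.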

These learnings are primarily for decision makers: those who are pricing the ideas and deciding which is the next priority for development. One common misstep, however, is not packaging and communicating learnings for wider consumption by the entire studio. This is why someone on the team, ideally the product manager, should be at least a bit of a good storyteller. All the data, analysis, and charts should be reduced and refined to clear learnings that can be shared and understood by anyone in the studio, regardless of their statistics or game design training.

Personally, I hold a weekly numbers review. This process is inspired by the COMPSTAT meetings at the New York City Police Department where the police captain responsible for a neighborhood has to explain any changes in crime data and what they plan to do about it on a regular basis. The numbers review focuses solely on the question, “What did we learn?” All the week’s product & analysis work is reduced into simple, clear stories of what was tried and what was learned from it. The goal of this process is a slow, but steady, improvement in the quality and applicability of the idea inflow from inside AND outside the game team as everyone gains a better understanding of what can truly change player behavior.

As a recap here are my eight key points for running a live game:

- The challenge of running a live game is trying to change people’s behavior and, depending on your available resources and business goals, identifying what change will have the most impact for the most people

- Growth via new players is always going to get more expensive and more difficult

- As a result, the best strategy is a process that quickly generates learnings about your audience allowing you to get better at identifying the few variables which can have a major business impact

- A helpful practical framework is the OODA loop developed by the US military: the process of Observing, Orienting, Deciding, and Acting faster and more effectively than the enemy

- Speed is an underappreciated key to success

- The live game process itself:

  - The first step is a robust inflow of ideas and proposals

  - The second step is the game team predicting the cost, benefit, and risks of each idea in a way that allows them to be easily compared and contrasted

  - The third step, executing and implementing the change, should be done with a live service in mind and the necessary server side controls, analytics, communication, etc.

  - Finally the observed impact should be compared to the predicted impact of the change to generate a learning about whether the underlying hypothesis is supported as a means to significantly change player behavior

- Don’t forget the importance of reducing these learnings into simple, clear ‘stories’ and sharing them with everyone in the studio

- A humble, curious culture is the foundation for a best in class live game process